The Reverse Turing Test for AI

Google Duplex has been described as the world's most lifelike chatbot. At the Google I/O event in May 2018, Google revealed this extension of Google Assistant, which lets it carry out natural conversations by mimicking a human voice. Duplex is still in development and will receive further testing during summer 2018.

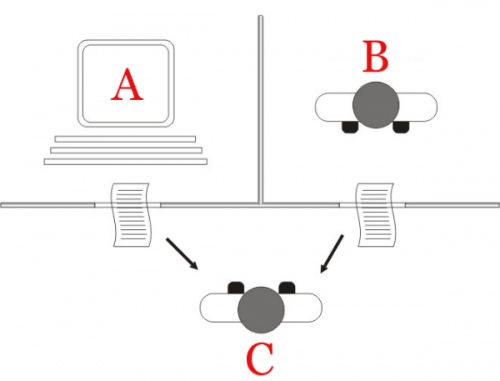

The assistant can complete tasks such as calling to book an appointment, making a restaurant reservation, or calling the library to verify its hours. Although Duplex can complete most tasks autonomously, it can also recognize situations it is unable to handle and then signal a human operator to finish the task.

Duplex speaks in a more natural voice and language by incorporating "speech disfluencies," such as filler words like "hmm" and "uh" and common phrases such as "mhm" and "gotcha." It is also programmed to use more human-like intonation and response latency.

Does this sound like a wonderful advancement in AI and language processing? Perhaps, but it has also been met with some criticism.

Are you familiar with the Turing Test? Developed by Alan Turing in 1950, it is a test of a machine's ability to exhibit intelligent behavior equivalent to, or indistinguishable from, that of a human. For example, when communicating with a machine via speech or text, can the human tell that the other participant is a machine? If the human can't tell that the interaction is with a machine, the machine passes the Turing Test.

Should a machine have to tell you that it's a machine? After the Duplex announcement, people began raising concerns about the ethical and societal implications of this use of artificial intelligence. Google later issued a statement saying, "We are designing this feature with disclosure built-in, and we’ll make sure the system is appropriately identified."

Privacy, a real hot-button issue right now, is another concern. Your conversations with Duplex are recorded so that the virtual assistant can analyze and respond to them.

I heard another example of this on an episode of Marketplace Tech with Molly Wood that discussed Microsoft's purchase of Semantic Machines, a company working on what it calls "conversational AI." That is their term for computers that sound and respond like humans.

This technology is meant to be used with digital assistants such as Microsoft's Cortana, Apple's Siri, Amazon's Alexa or Samsung's Bixby. In a demo played on the podcast, the humans on the other end of the calls made by the AI assistant did not know they were talking to a computer.

Do we need a "reverse Turing test," something that tells us we are talking to a machine? In that case, a failed Turing test is exactly the result we would want, because it would tell us that we are dealing with a machine and not a human.

To really grasp the power of this kind of AI assistant, watch and listen to this excerpt from the Google I/O keynote, in which Duplex makes two appointments. It is impressively scary.

Technologies like Google Duplex are not meant to replace humans but to carry out very specific tasks in what Google calls "closed domains." Duplex won't be your online therapist, but it will book a table at that restaurant, and it may not mind spending 22 minutes on hold with the motor vehicles department.

The demo voice does not sound like a computer or Siri or most of the computer voices we have become accustomed to hearing.

But is there an "uncanny valley" for machine voices, as there is for humanoid robots and animation? That valley is where something gets close to human, but not quite close enough, and we end up in the "creepy treehouse in the uncanny valley."

I imagine some businesses would be very excited about using these AI assistants to answer basic service, support and reservation calls. Would you be okay knowing that when you call to make that dentist appointment, you will be talking to a computer?

The research continues. Google Duplex uses a recurrent neural network (RNN). How that works is beyond my tech knowledge base, but this seems to be the way ahead for machine learning, language modeling and speech recognition.

Not having to spend a bunch of hours each week on the phone doing fairly simple tasks seems like a good thing. But if AI assistant HAL refuses to open the pod bay doors, I'm going to panic.

Will this technology be misused? Absolutely. That always happens, no matter how much testing we do. Should we move forward with the research? Well, no one is asking for my approval, but I say yes.

Designers humanize virtual assistants with names - Siri, Cortana and Alexa - and sometimes we might forget that we are not talking to a person. In

The word multiplicity actually makes me think of a comedy film with Michael Keaton. In that